1. Incident summary

In a Kubernetes cluster, calico-node was being restarted repeatedly. The details of one of the affected Pods:

[root@k8s-master01 ~]# kubectl describe po -n kube-system calico-node-6sqwm

......

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning Unhealthy 20m kubelet Readiness probe failed: OCI runtime exec failed: exec failed: unable to start container process: error executing setns process: exit status 1: unknown

Warning Unhealthy 20m (x2 over 20m) kubelet Readiness probe failed: OCI runtime exec failed: exec failed: unable to start container process: read init-p: connection reset by peer: unknown

Warning Unhealthy 19m (x3 over 20m) kubelet Liveness probe failed: OCI runtime exec failed: exec failed: unable to start container process: read init-p: connection reset by peer: unknown

Warning Unhealthy 19m (x2 over 20m) kubelet Liveness probe failed: OCI runtime exec failed: exec failed: unable to start container process: error executing setns process: exit status 1: unknown

Normal Pulled 19m (x2 over 3h23m) kubelet Container image "xxxxx/xxx/calico/node:v3.28.0" already present on machine

Normal Created 19m (x2 over 3h23m) kubelet Created container calico-node

Normal Started 19m (x2 over 3h23m) kubelet Started container calico-node

Warning Unhealthy 19m (x3 over 19m) kubelet Liveness probe failed: OCI runtime exec failed: exec failed: unable to start container process: error starting setns process: fork/exec /proc/self/exe: resource temporarily unavailable: unknown

Warning Unhealthy 19m (x2 over 19m) kubelet Readiness probe failed: OCI runtime exec failed: exec failed: unable to start container process: error starting setns process: fork/exec /proc/self/exe: resource temporarily unavailable: unknown

Normal Killing 19m kubelet Container calico-node failed liveness probe, will be restarted

Warning Unhealthy 19m (x4 over 3h23m) kubelet Readiness probe failed: calico/node is not ready: BIRD is not ready: Error querying BIRD: unable to connect to BIRDv4 socket: dial unix /var/run/calico/bird.ctl: connect: connection refused

The events show that the calico-node container cannot become Ready and that its readiness and liveness probes fail repeatedly. At the same time, the business side reported interface timeouts in some service modules.

2. Analysis

2.1 Core symptoms

From the Events:

Warning Unhealthy 20m kubelet Readiness probe failed: OCI runtime exec failed: exec failed: unable to start container process: error executing setns process: exit status 1: unknown

Warning Unhealthy 19m kubelet Liveness probe failed: OCI runtime exec failed: exec failed: unable to start container process: read init-p: connection reset by peer: unknown

...

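Warning Unhealthy 19m kubelet Readiness probe failed: calico/node is not ready: BIRD is not ready: Error querying BIRD: unable to connect to BIRDv4 socket: dial unix /var/run/calico/bird.ctl: connect: connection refused

Interpretation:

- The liveness and readiness probes fail repeatedly, so kubelet does not consider the container healthy.

- The message "error executing setns process" is the typical error when the container runtime fails to join the container's namespaces in order to run the probe command.

- BIRD (Calico's routing daemon) is not ready: the socket /var/run/calico/bird.ctl refuses connections.

2.2 Checking inside the container

# List the processes inside the calico-node container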

[root@k8s-master01 ~]# kubectl exec -n kube-system calico-node-6sqwm -- ps aux | grep bird

Defaulted container "calico-node" out of: calico-node, upgrade-ipam (init), install-cni (init), mount-bpffs (init)

command terminated with exit code 126

# Check the BIRD socket files

[root@k8s-master01 ~]# kubectl exec -n kube-system calico-node-6sqwm -- ls -la /var/run/calico/

Defaulted container "calico-node" out of: calico-node, upgrade-ipam (init), install-cni (init), mount-bpffs (init)

total 0

drwxr-xr-x 3 root root 120 Mar 23 05:06 .

drwxr-xr-x 1 root root 72 Mar 23 05:06 ..

srw-rw---- 1 root root 0 Mar 23 05:06 bird.ctl

srw-rw---- 1 root root 0 Mar 23 05:06 bird6.ctl

dr-xr-xr-x 2 root root 0 Nov 5 06:14 cgroup

-rw------- 1 root root 0 Nov 5 06:15 ipam.lock

# Look at the BIRD log

[root@k8s-master01 ~]# kubectl exec -n kube-system calico-node-6sqwm -- cat /var/log/calico/bird.log

Defaulted container "calico-node" out of: calico-node, upgrade-ipam (init), install-cni (init), mount-bpffs (init)

cat: /var/log/calico/bird.log: No such file or directory

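command terminated with exit code 1

The checks inside the container are abnormal as well:

kubectl exec … ps aux | grep bird

command terminated with exit code 126

Interpretation:

- Exit code 126 means "command could not be executed" (for example permission denied, or the runtime could not start the process).

- The command that failed here is ps aux (grep bird runs on the local side of the pipe), so the exec itself could not start a process in the container. This says nothing about the bird binary yet.

- bird.log does not exist at the path checked, which is consistent with BIRD not having started or having failed to start, although on its own it is not conclusive.

The /var/run/calico/ directory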

srw-rw---- 1 root root 0 Mar 23 05:06 bird.ctl

srw-rw---- 1 root root 0 Mar 23 05:06 bird6.ctl

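Interpretation:

- The socket files exist, but no process is listening on them.

- BIRD did not start successfully, so the probes keep failing.

- ipam.lock exists, so IPAM initialization probably succeeded; the BIRD startup failure is what keeps the node from becoming Ready.

OCI runtime exec / setns errors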

OCI runtime exec failed: exec failed: unable to start container process: error starting setns process: fork/exec /proc/self/exe: resource temporarily unavailable

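- This is a low-level container runtime problem. "resource temporarily unavailable" is the message for EAGAIN, which fork returns when no new process can be created. Common causes:

  - Node resources exhausted, in particular PIDs (fork fails).

  - Container runtime or kernel bug: containerd or runc incompatible with the kernel version.

  - Security restrictions: for example SELinux, seccomp, or cgroup settings that prevent setns.

  - Corrupted container image: the BIRD binary inside the calico/node image may not be executable.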

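Summary of the failure chain

- After calico-node starts:

  - BIRD fails to start.

  - No process listens on /var/run/calico/bird.ctl.

  - The probes fail repeatedly and kubelet restarts the container.

  - exec fails (exit 126 / setns error), so the container cannot be entered for troubleshooting.

- The root cause is most likely either BIRD being unable to start or a problem in the container runtime / kernel environment.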

3. Troubleshooting

3.1 Check whether it is a node resource problem

Check whether PIDs are exhausted:

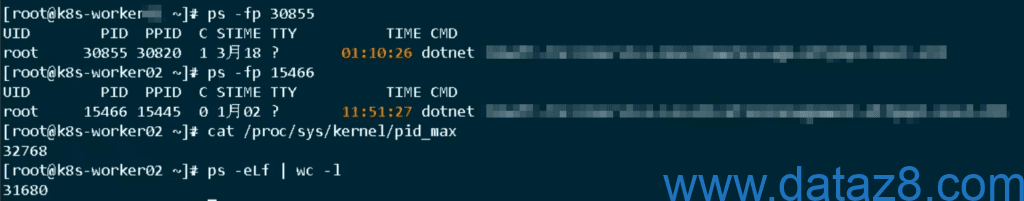

[root@k8s-worker02 ~]# cat /proc/sys/kernel/pid_max

32768

[root@k8s-worker02 ~]# ps -eLf | wc -l

31635

[root@k8s-worker02 ~]# free -h

total used free shared buff/cache available

Mem: 62G 22G 21G 4.8G 19G 35G

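Swap: 0B 0B 0B

This settles it. Memory is not the problem (35G is still available) and nothing points to the image. The node is running about 31,600 threads against a pid_max of 32,768, roughly 97% of the PID space. PIDs are effectively exhausted, so calico-node cannot fork and BIRD fails to start.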

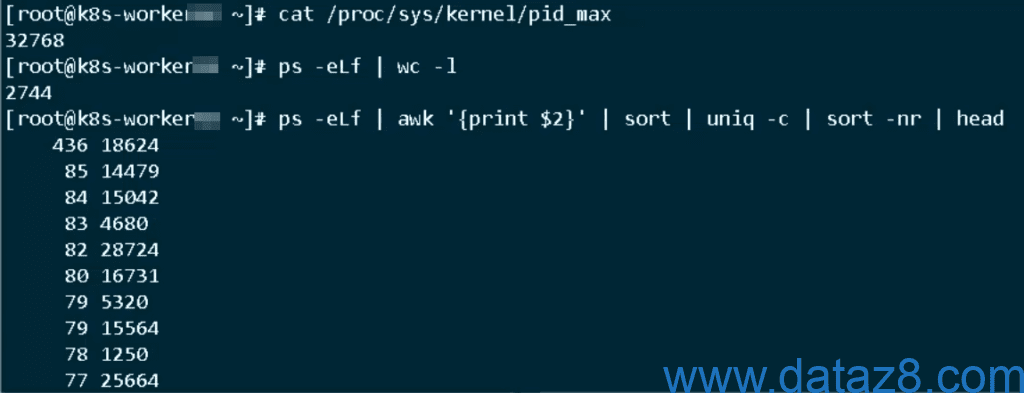
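The check above can also be scripted. The following is a minimal sketch, not part of the original investigation: the function names pid_usage_percent and threads_from_loadavg are made up for this example. It takes the total number of kernel scheduling entities (threads) from the fourth field of /proc/loadavg instead of counting ps output lines, and compares it with kernel.pid_max.

```shell
#!/bin/sh
# Integer percentage of "used" relative to "limit".
pid_usage_percent() {
    echo $(( $1 * 100 / $2 ))
}

# Extract the total number of scheduling entities from a line in
# /proc/loadavg format, e.g. "0.50 0.40 0.30 3/31635 12345".
# The fourth field is "runnable/total"; we keep the part after the slash.
threads_from_loadavg() {
    set -- $1            # split the line into positional parameters
    echo "${4#*/}"
}

# Only run the live check where the Linux /proc files are available.
if [ -r /proc/loadavg ] && [ -r /proc/sys/kernel/pid_max ]; then
    used=$(threads_from_loadavg "$(cat /proc/loadavg)")
    limit=$(cat /proc/sys/kernel/pid_max)
    echo "threads=$used pid_max=$limit usage=$(pid_usage_percent "$used" "$limit")%"
fi
```

With the numbers from this incident, pid_usage_percent 31635 32768 prints 96 (integer division).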

3.2 Find the process with the most threads

[root@k8s-worker02 ~]# ps -eLf | awk '{print $2}' | sort | uniq -c | sort -nr | head

21317 30855

7853 15466

262 18624

85 14479

83 4680

82 15042

81 5320

81 28724

81 13234

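80 16731

Root cause, narrowed down to the process level. The first column of the output is the thread count, the second is the PID:

| Threads | PID |
|---|---|
| 21317 | 30855 |
| 7853 | 15466 |

Conclusion:

PID 30855 alone holds more than 21,000 threads.

Together with PID 15466:

21317 + 7853 = 29170 threads

The system-wide pid_max is only 32768, so these two processes consume 89% of the PID space.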

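To confirm what these processes are, run:

ps -fp 30855

ps -fp 15466

or read the command line from /proc (the arguments are NUL-separated, so tr turns the separators into spaces):

tr '\0' ' ' < /proc/30855/cmdline

tr '\0' ' ' < /proc/15466/cmdline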
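The thread count and the command name can also be obtained in one step. A minimal sketch, assuming the procps version of ps, where the nlwp column is the number of threads of a process; the helper name top_by_threads is hypothetical:

```shell
#!/bin/sh
# Print the N input lines with the largest first column (thread count).
# stdin: lines of the form "NLWP PID COMMAND"; N defaults to 10.
top_by_threads() {
    sort -k1,1nr | head -n "${1:-10}"
}

# One line per process: thread count, PID, command name, no header.
# Errors are discarded so the sketch is harmless where ps lacks nlwp.
if command -v ps >/dev/null 2>&1; then
    ps -e -o nlwp= -o pid= -o comm= 2>/dev/null | top_by_threads 5
fi
```

Compared with counting ps -eLf lines per PID, this shows directly which command each of the large thread counts belongs to.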

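Why this happens (the key principle)

Both processes turned out to be dotnet (.NET) services. This is not ordinary process growth but a thread explosion.

Relevant .NET characteristics:

- It relies on the ThreadPool.

- IO, async continuations, timers, and socket callbacks all run on it.

- Under abnormal conditions the number of threads can keep growing.

Why does this take down Calico?

Because of how Linux accounts for threads:

- From the PID point of view, a thread is a process.

- Every thread occupies one ID from the same PID space.

Once the PIDs run out, fork() fails, with these results: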

| Component | Result |
|---|---|
| calico-node | ❌ cannot start |
| kubelet exec | ❌ fails |
| containerd | ❌ setns fails |
| probe | ❌ all fail |
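That each thread occupies its own ID can be seen directly in /proc: every thread of a process appears as a numbered entry under /proc/<pid>/task, and the Threads field of /proc/<pid>/status gives the count. A small sketch (the function name thread_count is an assumption for this example):

```shell
#!/bin/sh
# Print the number of threads of the process with the given PID,
# taken from the "Threads:" line of /proc/<pid>/status.
thread_count() {
    awk '/^Threads:/ { print $2 }' "/proc/$1/status"
}

# Example: inspect the current shell itself (Linux only).
if [ -r "/proc/$$/status" ]; then
    echo "PID $$ has $(thread_count $$) thread(s)"
    ls "/proc/$$/task"    # one entry per thread, named by thread ID
fi
```

On the affected node, thread_count 30855 would be expected to report about the same figure as the first column of the ps output above.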

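4. Solution

4.1 Kill the offending processes

kill -9 30855

kill -9 15466

or, if every dotnet process on the node may be killed:

pkill -9 dotnet

4.2 Raise the PID limit (to prevent a recurrence)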

sysctl -w kernel.pid_max=131072
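sysctl -w only changes the running kernel, so the value is lost on reboot. A hedged sketch of persisting it with a drop-in file follows; the file name 99-pid-max.conf and the helper write_pid_max_conf are assumptions for this example, and the target directory is a parameter so the function can be tried outside /etc/sysctl.d:

```shell
#!/bin/sh
# Write a sysctl drop-in that sets kernel.pid_max.
# $1: value to set, $2: target directory (defaults to /etc/sysctl.d)
write_pid_max_conf() {
    value=$1
    dir=${2:-/etc/sysctl.d}
    printf 'kernel.pid_max = %s\n' "$value" > "$dir/99-pid-max.conf"
}

# As root on the node:
#   write_pid_max_conf 131072
#   sysctl --system    # reload all sysctl configuration files
```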

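4.3 Put limits on the dotnet workload (required)

    resources:
      requests:
        cpu: 1
        memory: 2Gi
      limits:
        cpu: 4
        memory: 8Gi

Do not allow BestEffort Pods: every Pod must declare requests. Note that CPU and memory limits do not cap the number of threads by themselves; a per-Pod PID limit is set on the kubelet through podPidsLimit (or the --pod-max-pids flag).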

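After these steps the problem was resolved and PID usage dropped.

5. Final summary

This was not a Calico problem. A business process (dotnet) suffered a thread explosion, which used up the PIDs and left the whole node unable to create new processes. Calico was simply the first component to fail.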